The consistency of shared-bus protocols is shown to be naturally stronger than that of non-bus protocols. The first protocol of its kind is presented for a large hierarchical multiprocessor, using a bus-based protocol within each cluster and a general protocol in the network connecting the clusters to the shared main memory. Coherence is compared and contrasted with other levels of consistency, which are also identified. All new protocols have been proven correct; one of the proofs is included. Previous definitions of cache coherence are shown to be inadequate, and a new definition is presented. A new class of protocols is presented that offers reduced implementation cost and expandability while retaining a high level of performance, as illustrated by simulation results using a crossbar switch. The simulation model and parameters are described in detail. Previous protocols for general interconnection networks are shown to contain flaws and to be costly to implement. In each category, a new protocol is presented that, based on simulation results, performs better than previous schemes. In invalidation protocols, all other cached copies must be invalidated before any copy can be changed; in distributed write protocols, all copies must be updated each time a shared block is modified.

Keywords: Shared-memory multiprocessor, Write-update cache coherence protocols, Relaxed memory consistency models, Lockup-free cache design, Performance.

Most shared-memory multiprocessors employ a cache hierarchy to reduce the time needed to access memory by keeping data values as close as possible to the processor that uses them. Although the distinction between these machines is. When a task running on a processor P requests the data in memory location X, for example, the contents of X are copied to the cache, from which they are passed on to P.
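The difference between invalidation and distributed-write (write-update) protocols can be illustrated with a toy snooping-bus model. This is a minimal sketch under stated assumptions, not any of the protocols presented in the dissertation: the class names `Bus` and `Cache`, the `broadcast_write` method, and the `policy` strings are hypothetical; caches are plain dictionaries with no capacity limit and no per-block states; and every write is written through to memory so that a read miss always finds the latest value.

```python
from typing import Dict, List


class Bus:
    """Hypothetical shared bus connecting private caches to main memory.

    Simplifying assumption: every write is also written through to memory,
    so memory always holds the latest value and no ownership states are needed.
    """

    def __init__(self, policy: str) -> None:
        if policy not in ("invalidate", "update"):
            raise ValueError("policy must be 'invalidate' or 'update'")
        self.policy = policy
        self.memory: Dict[int, int] = {}
        self.caches: List["Cache"] = []

    def attach(self, cache: "Cache") -> None:
        self.caches.append(cache)

    def broadcast_write(self, writer: "Cache", addr: int, value: int) -> None:
        # Every other cache snoops the bus transaction for this address.
        for cache in self.caches:
            if cache is writer or addr not in cache.lines:
                continue
            if self.policy == "invalidate":
                del cache.lines[addr]          # invalidation: drop the other copy
            else:
                cache.lines[addr] = value      # distributed write: refresh the copy
        self.memory[addr] = value              # write-through simplification


class Cache:
    """Hypothetical private cache holding address -> value copies."""

    def __init__(self, bus: Bus) -> None:
        self.bus = bus
        self.lines: Dict[int, int] = {}
        bus.attach(self)

    def read(self, addr: int) -> int:
        if addr not in self.lines:             # miss: copy the value from memory
            self.lines[addr] = self.bus.memory.get(addr, 0)
        return self.lines[addr]

    def write(self, addr: int, value: int) -> None:
        # Other caches see the write on the bus before the local copy changes.
        self.bus.broadcast_write(self, addr, value)
        self.lines[addr] = value


for policy in ("invalidate", "update"):
    bus = Bus(policy)
    p0, p1 = Cache(bus), Cache(bus)
    p0.read(0x10)
    p1.read(0x10)              # both processors now hold a copy of X
    p0.write(0x10, 42)         # P0 modifies its copy
    print(policy, 0x10 in p1.lines, p1.read(0x10))
# prints:
# invalidate False 42
# update True 42
```

With `"invalidate"`, P1's copy is dropped and its next read misses and fetches the new value; with `"update"`, P1's copy is refreshed in place. Practical protocols track per-block states (for example, whether a block is dirty or exclusively owned) so that they do not have to send every write through to memory.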

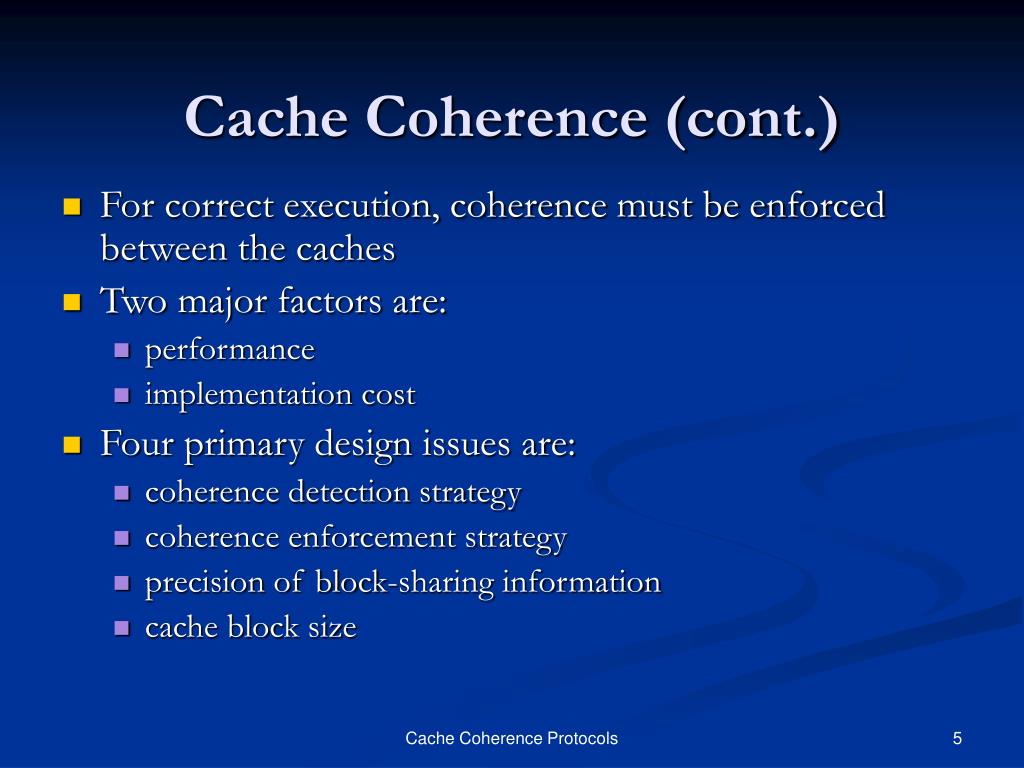

Previously proposed shared-bus protocols are described using uniform terminology, and they are shown to divide into two categories: invalidation and distributed write. A shared-memory multiprocessor can be considered a compromise between a parallel machine and a network of workstations.

3.1 Cache-Memory Coherence

In a single-cache system, coherence between memory and the cache is maintained using one of two policies: (1) write-through, and (2) write-back. This dissertation explores possible solutions to the cache coherence problem and identifies cache coherence protocols (solutions implemented entirely in hardware) as an attractive alternative. Protocols for shared-bus systems are shown to be an interesting special case. The cache coherence problem is keeping all cached copies of the same memory location identical.

The cache coherence problem in shared-memory multiprocessors

Adding a cache memory for each processor reduces the average access time, but it creates the possibility of inconsistency among cached copies. Though the number of processors on a single-bus-based shared-memory multiprocessor is limited by the bus bandwidth, large caches with efficient coherence. However, sharing memory between processors leads to contention, which delays memory accesses. Shared-memory multiprocessors offer increased computational power and the programmability of the shared-memory model.
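The two single-cache write policies, and the reason per-processor caches create a coherence problem, can be sketched in a few lines. The `SingleCache` class, its `evict` method, and the dirty-set bookkeeping are hypothetical illustrations, assuming unbounded dictionary-based caches and a shared dictionary standing in for main memory.

```python
from typing import Dict, Set


class SingleCache:
    """Hypothetical cache illustrating the write-through and write-back policies."""

    def __init__(self, memory: Dict[int, int], write_back: bool) -> None:
        self.memory = memory
        self.write_back = write_back
        self.lines: Dict[int, int] = {}
        self.dirty: Set[int] = set()

    def read(self, addr: int) -> int:
        if addr not in self.lines:            # miss: copy the value from memory
            self.lines[addr] = self.memory.get(addr, 0)
        return self.lines[addr]

    def write(self, addr: int, value: int) -> None:
        self.lines[addr] = value
        if self.write_back:
            self.dirty.add(addr)              # write-back: memory updated on eviction
        else:
            self.memory[addr] = value         # write-through: memory updated now

    def evict(self, addr: int) -> None:
        if addr in self.dirty:                # flush a modified block before dropping it
            self.memory[addr] = self.lines[addr]
            self.dirty.discard(addr)
        self.lines.pop(addr, None)


# Write-through: memory sees the new value immediately.
mem_wt = {0: 1}
wt = SingleCache(mem_wt, write_back=False)
wt.write(0, 2)
print(mem_wt[0])    # 2

# Write-back: memory stays stale until the block is evicted.
mem_wb = {0: 1}
wb = SingleCache(mem_wb, write_back=True)
wb.write(0, 2)
print(mem_wb[0])    # 1
wb.evict(0)
print(mem_wb[0])    # 2

# Two private caches sharing memory, with no coherence protocol.
shared = {0: 1}
p = SingleCache(shared, write_back=False)
q = SingleCache(shared, write_back=False)
q.read(0)           # q caches the old value 1
p.write(0, 5)       # memory becomes 5, but q's copy is untouched
print(q.read(0))    # 1 (stale copy)
```

The last case shows that write-through alone keeps memory current but does not keep the copies held in other processors' caches identical; keeping those copies consistent is the job of a cache coherence protocol.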
